The New Moral Panic

- Martyna Wiśniewska

- 6 hours ago

Why Age-Verification Laws Will Not Fix Social Media

“The telephone mania is among the latest negative results of modern inventions, and those who are the victims are objects of dread to their fellow citizens, although they themselves frequently live in a blissful ignorance of their affection.”

One might take this for a professional diagnosis of today's worldwide smartphone addiction among teenagers. However, the quote comes from the “New-York Tribune” in 1897 and expresses concerns about landline telephone usage. “Talk-Timer Designed for Telephone Addicts” is another headline from that era. Moral panic over popular new inventions, especially those used by teenagers, is a long-standing phenomenon. “Children who read too much,” screamed front-page headlines in 1900, or “Don’t read in Bed!” In the 1940s, psychiatrists compared watching TV to using drugs, while a decade later comic books earned the title of “Evil Communications”.

Oddly enough, bicycles and teddy bears also received negative press. When the bicycle debuted in the 1800s, it was blamed for all sorts of problems: from turning people insane, to devastating local economies, to destroying women’s morals. In 1907, the “Davenport Weekly Democrat and Leader” published an interview with a priest about the cruel nature of teddy bears. The priest accused the teddy bear of destroying all instincts of motherhood in girls and predicted that it would one day be recognized as one of the most powerful factors in the rise of suicide. Dolls, by contrast, were good, the priest said, and helped to implant in little girls the instincts which some day would make them long for children.

Although these examples may seem extreme, they are not unrelated. We now know that reading is beneficial, that TV is not as addictive as previously presumed, and that bicycles do not drive people insane. Periodic moral panics over new inventions are nothing new.

In the last few years, more and more countries have introduced age verification laws that ultimately aim to ban children and teenagers from social media. The core justification is a presumed mental health crisis induced by social media. These laws are not only deeply ineffective and harmful to user privacy, but they are also not rooted in any scientific consensus regarding “social media addiction”. Thus, the question is: are we witnessing another moral panic, this time over social media use?

Is Social Media Really Addictive?

The main objectives of introducing age verification laws are:

- Protection of minors (preventing children from accessing inappropriate or harmful content such as pornography, gambling, or content related to mind-altering substances)

- Online safety (reducing minors’ exposure to online predators, exploitation, and grooming)

- Mental health protection (limiting exposure to content that may negatively impact young people’s mental health, such as self-harm or extreme violence)

The first misconception about social media is that it is addictive in itself. Social media addiction is a behavioral addiction, and most research has found that such behaviors are present in only a minority of social media users: from 3.42% in a study of US 18-25 year olds, to 4.5% in a study in the Netherlands, to 9.4% among Finnish adolescents. Commonly used comparisons of social media to addictive substances such as drugs, alcohol, or nicotine are therefore unfounded. About 25% of people who use illicit drugs develop an addiction, and more than 70% of people who tried drugs before the age of 13 are addicted to alcohol or drugs. The percentage of users addicted to social media does not come close.

Yet almost half of teenagers in Britain say they feel addicted to social media. The term "doomscrolling" has firmly entered the modern vocabulary, yet recent studies indicate that users scroll social media feeds not because of addiction but out of habit. Moreover, studies suggest that social media users greatly overestimate their addiction to these platforms.

Additionally, the negative framing and moral panic surrounding technology only worsen harmful behavior. Even brief exposure to narratives framing social media as addictive has measurable psychological effects. Research shows that just two minutes of exposure to addiction-focused messaging is enough to produce a statistically significant negative impact on users. Continued exposure to broader media narratives portraying social media as addictive can amplify these effects even further. Rather than empowering individuals to regain control over their online behavior, the addiction label may actually undermine it by reducing users’ sense of control, increasing self-blame, and making their overall experience with social media more negative. Finally, frequent use of the phrase “social media addiction” encourages people to believe that “addiction” is interchangeable with heavy social media use.

Social Media and Mental Health: Correlation Is Not Enough

The second fallacy is that social media is the cause of the mental health crisis among young people. This belief is driven largely by the correlation between the two.

However, what graphs like this one often overlook are the COVID-19 pandemic and the subsequent worldwide lockdowns, as well as economic and environmental instability. Evidence from empirical research also suggests that broader social conditions may play a more significant role than technology itself. For instance, a 2024 study of 620 adolescents aged 12–17 analyzed the relationship between screen time, socioeconomic status, and symptoms of depression, anxiety, and stress. The findings showed that screen time had only a weak correlation with mental health problems. In contrast, socioeconomic status was strongly associated with levels of depression and stress. Adolescents from lower socioeconomic backgrounds reported significantly higher levels of psychological distress even when their screen time was similar to that of their peers. These results suggest that social and economic conditions may be more influential predictors of youth mental health than technology use alone. In summary, although many studies have examined the relationship between social media usage and mental health problems, their results are ambiguous and do not confirm a causal link.

The Positive Side Policymakers Ignore

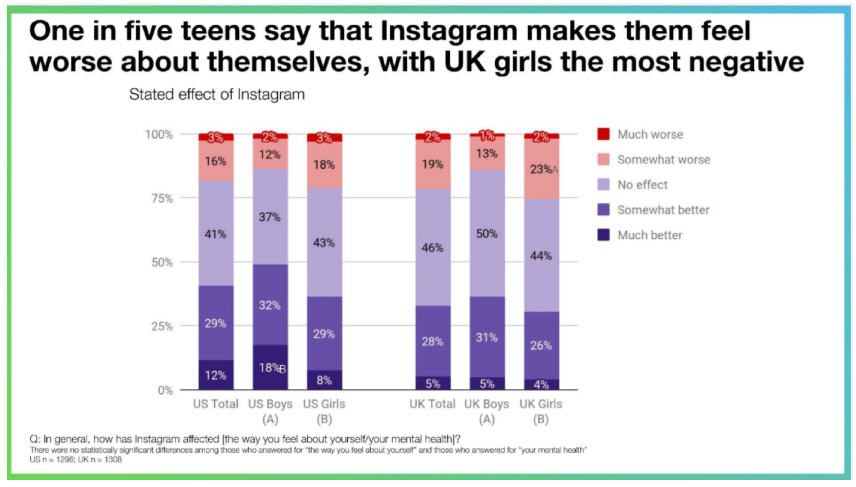

Many critics of social media usage overlook its potentially positive impact. The following chart from an internal Facebook research report published in 2019 illustrates an important divergence. It has been referenced by lawmakers around the globe in support of anti-social media policies, and it is easy to see why it caught their attention. The headline is chilling: Instagram makes 20% of teens feel worse about themselves. The chart indicates that Instagram usage is distressing for a minority of teenage girls. At the same time, it indicates that over 40% of U.S. teens say that Instagram made them feel better about themselves, more than twice as many as the U.S. teens reporting that Instagram made them feel worse. Even among U.S. girls, 37% say Instagram made them feel better about themselves, compared to 21% who say it made them feel worse.

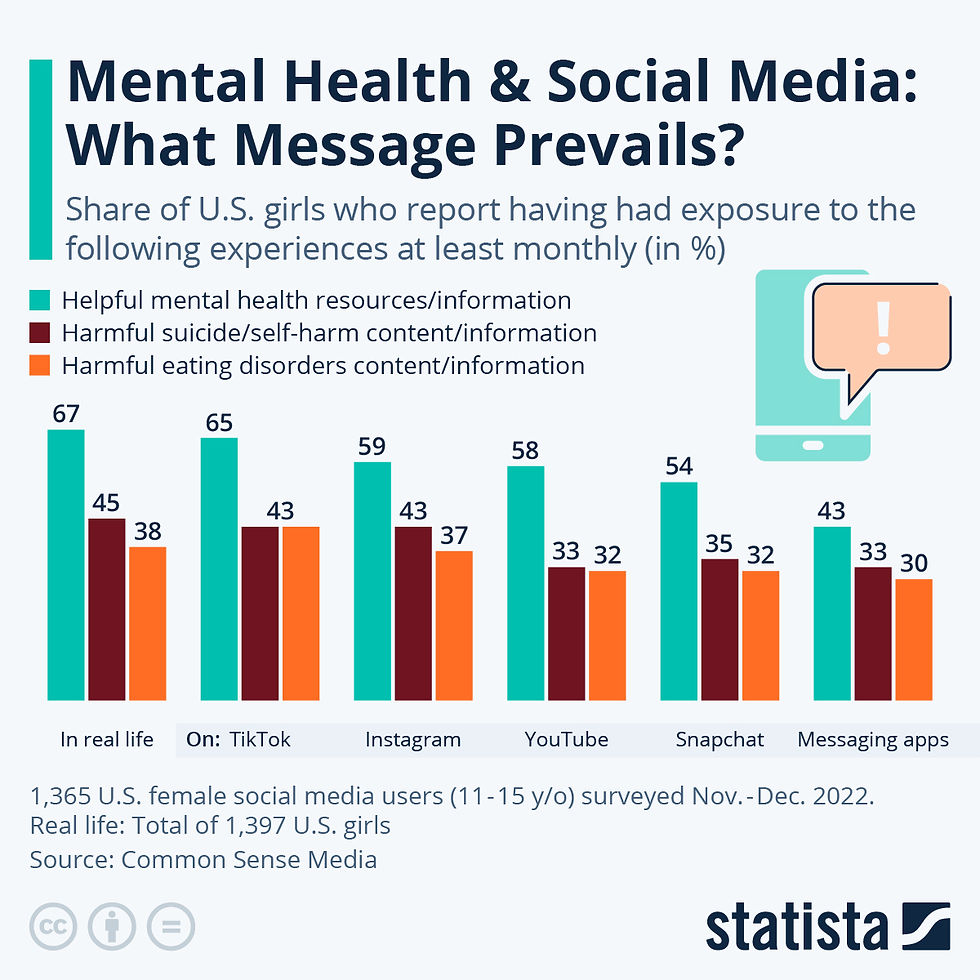

Similar results were shown a few years later in a 2022 survey conducted on girls aged 11-15, where again, the majority of users reported exposure to helpful, rather than harmful content across platforms.

The Myth of the Anonymous Online Predator

Another common justification for restricting minors’ access to social media is the prevention of online sexual abuse. Public debate often frames the issue as one of anonymous “internet predators” targeting children through social media platforms. However, empirical research paints a far more complex picture.

Researchers use the term “online sexual abuse” broadly to include grooming, sexual solicitation, and image-based abuse such as sextortion. Yet, studies consistently show that perpetrators of such acts are rarely strangers. A recent meta-analysis of 32 studies conducted by the Crimes Against Children Research Center found that 68% of offenders were acquaintances of the victim and 44% were themselves under the age of 18. Rather than targeting children randomly online, many perpetrators already knew the victim offline and used digital communication to maintain or deepen existing offline relationships.

This pattern is also visible in cases of sextortion. While the public often imagines coercive crimes committed by anonymous online predators, research suggests that most incidents resemble forms of intimate partner abuse or peer exploitation. One study found that 60% of minors experiencing sextortion knew the perpetrator in real life, often as a current or former romantic partner, and 75% had voluntarily shared the images before the abuse occurred. Nationally representative surveys of American teenagers similarly show that only about 4-6% of victims were targeted by someone they barely knew online.

In other words, the stereotypical scenario of an unknown predator abducting children after a chance encounter on social media is extremely rare. Most cases involve existing relationships that extend into digital spaces. As a result, restricting access to social media platforms would do little to address the underlying dynamics of these crimes, which are more closely related to peer relationships, dating violence, and cyberbullying than to anonymous online predation.

The Global Push for Age-Verification Laws

Although the moral rationale for introducing social media bans is often framed around protecting children’s mental health, concerns about data privacy and the practical consequences of enforcement have increasingly become central to the debate.

Australia provides the most radical example of this regulatory trend. In 2024, the Australian government passed amendments to the Online Safety Act, introducing a minimum age of 16 for social media accounts. The law requires platforms such as Instagram, TikTok, and Snapchat to take “reasonable steps” to prevent users under 16 from creating or maintaining accounts, with penalties reaching nearly 50 million Australian dollars for non-compliance. The policy came into effect in December 2025 and is widely described as the first nationwide social media ban for minors.

At the same time, recent data suggests that teenagers themselves are increasingly aware of how they use social media and what it represents. As shown in the figure below, Australian teens in particular report a sharp increase not only in treating social media as a controlled, personal space, but also in the positive emotional value they derive from it. A significant rise in respondents agreeing that social media “makes me feel good about myself” indicates that, for many young users, these platforms are not solely a source of harm but also of validation and self-expression. Compared to their global peers, Australian teens are also more likely to say that they “post everything they do,” “say what they really think,” and “care what people think about their profiles,” reinforcing the idea that social media plays a central role in shaping their identity and wellbeing. This highlights a key tension: while policymakers frame restrictions as protective, young users increasingly experience social media as both empowering and emotionally beneficial.

The United Kingdom has taken a different approach. Instead of implementing a blanket ban, the UK adopted the Online Safety Act, which focuses on platform responsibility and mandatory age verification for potentially harmful content. Platforms are required to introduce “highly effective age checks,” including tools such as ID verification, credit-card validation, or facial age estimation to prevent minors from accessing certain types of content. At the same time, however, proposals for a full social media ban for teenagers below the age of 16 have repeatedly faced political resistance. In 2026, British MPs rejected an amendment that would have introduced such a ban, arguing that it could push young users toward less regulated spaces online.

In the United States, the regulatory landscape is fragmented and largely driven by state-level initiatives. States such as Utah and Arkansas have attempted to introduce laws requiring age verification and parental consent for minors using social media. These regulations would have forced platforms to verify the age of all users and restrict certain features for teenagers. However, several of these laws have faced significant legal challenges. Courts have blocked some measures on constitutional grounds, arguing that broad restrictions on online platforms may violate free speech protections under the First Amendment.

Taken together, these cases illustrate the growing political momentum behind age-based restrictions on social media. At the same time, they also reveal significant legal, technological, and privacy challenges associated with implementing such policies. Mandatory age verification systems often require sensitive personal data, while enforcement difficulties and constitutional concerns raise doubts about whether blanket social media bans can effectively address the problems they are intended to solve.

Privacy Risks, Data Breaches and Hidden Costs

In addition to legal and technical challenges, proposals to enforce age verification and social media bans create real privacy and data security risks. Age checks typically require users to upload highly sensitive personal information, such as images of government‑issued ID documents or face scans, which must then be stored, processed, and sometimes shared with third‑party verification providers. This increases the amount of personally identifiable information (PII) vulnerable to exposure.

The dangers are not hypothetical. In July 2025, the popular dating safety app Tea suffered a major data breach that exposed approximately 72,000 user images, including selfies and government ID photos submitted for account verification, which were supposed to be deleted after review. The exposed images and private messages were later published on internet forums such as 4chan, illustrating how easily sensitive verification data can be leaked when stored by platforms or verification services.

Similarly, age-verification processes on other platforms have already led to related security incidents. In late 2025, it was reported that government ID photos submitted by users of the messaging platform Discord as part of an age appeal process had been exposed through a breach of a third-party customer support system. Tens of thousands of users may have had their identification images accessed, further underscoring the privacy risks inherent in storing verification data.

Both of these companies were forced to introduce age-verification in response to UK and Australian laws. These examples highlight the core paradox of current age verification policy proposals: mandating the collection of more sensitive personal data in the name of safety may actually make users, especially minors, more vulnerable to privacy breaches and exploitation. What is intended to protect can inadvertently expose.

But even setting aside the flaws of age verification mechanisms themselves, and even if those mechanisms were ultimately abandoned, platforms are already engaging in precautionary self-censorship with real consequences for free expression and user support networks. Regulatory pressure, particularly in the UK under the Online Safety Act, has pushed companies to over-filter content far beyond pornographic or clearly harmful material.

In practice, this has meant that platforms such as Reddit have aggressively moderated or removed content that does not involve explicit harm but could be interpreted as adult or boundary-challenging under ambiguous safety criteria. For example:

On Reddit, multiple forums dedicated to LGBTQ+ support and community discussion were restricted or hidden after automated moderation tools flagged them as adult‑oriented, even though their primary purpose was peer support and identity affirmation. Users reported that subreddits focusing on queer youth, coming‑out stories, and community advice were harder to find or subject to shadow‑banning because moderation systems could not reliably distinguish between supportive material and adult content.

Similarly, forums where minors and families sought help with serious issues, such as domestic abuse, mental health support, and family conflict, were sometimes removed or suppressed by overly broad content filters. Moderators and researchers documented cases where discussions about “how to get help when you’re a teenager living with an abusive caregiver” were interpreted as involving sexual or adult content, triggering automatic takedowns.

What unites these examples is ambiguity in the definition of “harmful content” and the incentives created by strict enforcement regimes: when platforms can be fined or sanctioned for hosting “adult or harmful content,” they often choose to restrict borderline content entirely rather than evaluate context. AI-driven filters frequently cannot distinguish support groups, educational discussions, and peer-to-peer assistance, especially around LGBTQ+ topics or abuse recovery, from content that is explicitly sexual or inappropriate.

Ultimately, this kind of self‑censorship does not protect young users, but denies them access to supportive communities, critical life information, and safe spaces for expression. Far from empowering users, it reinforces isolation and diminishes the individual.

Why Age Verification Will Not Work

Recent research indicates that social media usage is a habit. Habits are formed, and they can be changed and adapted. Prohibiting social media will therefore not teach teenagers to form healthy or safe relationships with technology. The technological reality cannot simply be undone. The only rational way to deal with its downsides is through sustained education.

It should not be forgotten that social media is, and always has been, permitted for users above the age of 13. It is primarily parents who allow children to engage in online social activity. They should not bear all the blame, however, since they were the first generation to witness this powerful technology enter everyday life and may not have been fully prepared for it. Many parents, though, still ask platforms to enable some form of parental control over children's online activities. Unfortunately, this idea overlooks the fact that such mechanisms are even more complicated than "traditional" age verification, since platforms would have to check not only the age of users but also their legal relationships with parents and caregivers.

Current age verification mechanisms typically require users to upload an ID document to access a website. Although there have been attempts to verify age via photos and face scans, such systems have been found to be vulnerable to AI-generated images. History also shows that the safety of this data is far from guaranteed. In 2015, the extramarital affair website Ashley Madison was hacked and user details were publicly released, exposing 37 million users. One year later, details of 63 million users of a major webcam pornography website were stolen. Even if only adult content providers were to implement age verification, their platforms and age verification vendors would become lucrative targets for cyber criminals and hacking groups.

Moreover, the growing use of virtual private networks (VPNs) undermines the effectiveness of such policies. According to VPNmentor, VPN demand in Utah skyrocketed by 967% following the state’s ban in 2023. Additionally, ExpressVPN provided data to Newsweek showing increased usage in the weeks following similar bans in other US states. This is not just an American phenomenon. In Thailand, where all adult websites were banned in 2020, one VPN vendor cited a 644% surge in installs.

Finally, age verification technology creates a substantial, profitable market, with significant revenue growth predicted for third-party verification providers. It also introduces significant costs for online services, hitting startups and small businesses hardest. Compliant sites may lose traffic as users shift to non-compliant platforms or turn to VPNs, creating new markets for circumvention tools and disrupting existing revenue models in the online content economy. The barriers to entry created by age verification walls will also hurt online providers. A load time of three seconds is often cited as a critical threshold beyond which abandonment rates climb sharply, with roughly 40% to 53% of users leaving a site that takes longer to load, and mobile users are even less patient. If a three-second delay is enough to drive users away, a mandatory identity check is likely to cost providers far more.

Beyond The Moral Panic

Contrary to popular belief, age verification will not reduce the negative effects of social media platforms. Instead, it will hand those platforms large amounts of user data. Age verification mechanisms will affect not only children and teenagers but also adults, who will be required to upload their sensitive data online. Adults have the right to privacy and anonymity online, and such laws violate this principle and threaten diversity of opinion.

Furthermore, there is no scientific consensus on whether social media use causes the current mental health crisis in the first place. Policymakers need to dig deeper and address the root causes of mental health issues. Because social media usage is a habit formed over time, countries should focus on introducing online education curricula into their schools, so that children can learn online etiquette, safe browsing, and critical thinking from an early age. The current moral panic around social media and teenagers may harm not only teens but society as a whole. So far, the hurried attempts of some countries to introduce social media bans have only provided evidence of how ineffective such measures are. This moral panic therefore needs to be reevaluated before it destroys data privacy and brings about widespread self-censorship of online spaces.

What may be comforting for many is that teenagers in the 21st century use smartphones primarily for the same reason teens in the 19th century used telephones: to communicate with peers. Teenagers increasingly prioritize direct messaging over other aspects of social media. While Instagram is a dominant app for direct messaging, teens also use platforms like WhatsApp, Snapchat, and Discord for rapid, intimate, and often private communication with friends, rather than public broadcasting. X, for instance, remains one of the least used social media apps.

Ultimately, the moral panic surrounding new technologies can greatly distort public perception and lead to ineffective policy responses. Instead of reacting to perceived technological threats, policymakers should focus on evidence-based solutions that support digital literacy, protect privacy, and address the broader social factors affecting young people’s well-being.

Bibliography

(1900, March 16). Children Read Too Much. The Kansas State Register. https://www.newspapers.com/article/the-kansas-state-register/61943132/

(1907, July 11). Davenport Weekly Democrat and Leader. https://www.newspapers.com/article/davenport-weekly-democrat-and-leader/38537623/

(1955, September). The Observer. https://www.newspapers.com/article/the-observer/29899369/

(1958, December 14). Hartford Courant. https://www.newspapers.com/article/hartford-courant/73689670/

(1977, September 6). TV, drug addiction similar: psychiatrist. Chicago Tribune. https://www.newspapers.com/article/chicago-tribune/39478896/

(1897, April 4). The New York Tribune. https://www.newspapers.com/article/new-york-tribune/34746971/

(2024, February 22). More than just a number: Costs and business impacts on startups of determining user age. Medium. https://engineadvocacyfoundation.medium.com/more-than-just-a-number-costs-and-business-impacts-on-startups-of-determining-user-age-ceabe03d40b1

An D., Meenan P. (2016, July). Why Marketers Should Care About Mobile Page Speed. Google/SOASTA. https://www.thinkwithgoogle.com/_qs/documents/2698/53dff_Why-Marketers-Should-Care-About-Mobile-Page-Speed-EN.pdf

Anderson I. A., Wood W. (2025, November 27). Overestimates of social media addiction are common but costly. Nature. https://www.nature.com/articles/s41598-025-27053-2

Edmonds L., Vlamis K. (2024, December 13). Teens aren't that into X — but another social media platform is increasingly getting their attention. Business Insider.https://www.businessinsider.com/x-lesspopular-with-teens-more-use-whatsapp-meta-2024-12

Goldberg Z. (2025, April 3). Mental-Health Trends and the “Great Awokening”. Manhattan Institute. https://manhattan.institute/article/mental-health-trends-and-the-great-awokening

Goldman E. (2025). The “Segregate and Suppress” Approach to Regulating Child Safety Online. Stanford Technology Law Review. https://law.stanford.edu/wp-content/uploads/2025/07/Segregate-and-Suppress.pdf

Marwick, A., Smith, J., Caplan, R., & Wadhawan, M. (2024). Child Online Safety Legislation (COSL) - A Primer. The Bulletin of Technology & Public Life. https://citap.pubpub.org/pub/cosl/release/5

Merelle S. Y. M., Kleiboer A. M., Schotanus M., Cluitmans T. L. M., Waardenburg C. M., Kramer D., Mheen D., Rooij A. (2017). Which Health-Related Problems Are Associated with Problematic Video-Gaming or Social Media Use in Adolescents? Clinical Neuropsychiatry, 14(1), 11–19. https://research.tilburguniversity.edu/en/publications/which-health-related-problems-are-associated-with-problematic-vid/

Moreno M. (2022, January 27). Measuring Problematic Internet Use, Internet Gaming Disorder, and Social Media Addiction in Young Adults: Cross-Sectional Survey Study. JMIR Public Health and Surveillance, 8(1). https://publichealth.jmir.org/2022/1/e27719

Osmond C. (2025, October 9). ID photos of 70,000 users may have been leaked, Discord says. BBC. https://www.bbc.com/news/articles/c8jmzd972leo

Paakkari L., Tynjälä J., Lahti H., Ojala K., Lyyra N. (2021, January). Problematic Social Media Use and Health among Adolescents. International Journal of Environmental Research and Public Health, 18(4). https://www.mdpi.com/1660-4601/18/4/1885#Discussion

Prescod M. (2024, October 2). Why Online Age Verification will give us the worst of both worlds. IEA. https://iea.org.uk/why-online-age-verification-will-give-us-the-worst-of-both-worlds/

Sim K. (2025, August 7). The Online Safety Act Has Nothing to Do With Child Safety and Everything to Do With Censorship. Novara Media. https://novaramedia.com/2025/08/07/the-online-safety-act-has-nothing-to-do-with-child-safety-and-everything-to-do-with-censorship/

Visier-Alfonso M. E., López-Gil J. F., Mesas A. E., Jiménez-López E., Cekrezi S., Martínez-Vizcaíno V. (2024, November 7). Does Socioeconomic Status Moderate the Association Between Screen Time, Mobile Phone Use, Social Networks, Messaging Applications, and Mental Health Among Adolescents? Cyberpsychology, Behavior, and Social Networking. https://pubmed.ncbi.nlm.nih.gov/39469773/

Wakefield J. (2025, August 23). My ex stalked me, so I joined a 'dating safety' app. Then my address was leaked. BBC. https://www.bbc.com/news/articles/ce87rer52k3o

Whittaker R. (2025, November 28). Instagram users are scrolling out of habit not addiction, study finds. Independent. https://www.independent.co.uk/news/health/instagram-addiction-scrolling-reels-b2874096.html

Yerby N. Addiction Statistics. Addiction Center. https://www.addictioncenter.com/addiction/addiction-statistics/
